MusicLM is Google’s AI model designed for generating high-fidelity music from text descriptions

Discover the Future of Music Creation with MusicLM

MusicLM is an AI model from Google that generates high-fidelity music from text descriptions. It treats music generation as a hierarchical sequence-to-sequence modeling task and produces 24 kHz audio that stays consistent over several minutes. MusicLM can be conditioned on both text and melody, so it can transform a whistled or hummed melody to match the style described in a text caption. Prompts can draw on many kinds of description, such as painting captions, instruments, genres, musician experience levels, places, and epochs, and the model returns diverse audio that follows them closely.

Features: Explore the Capabilities of MusicLM

MusicLM boasts a range of features that set it apart from other music generation models:

- Hierarchical Sequence-to-Sequence Modeling: MusicLM frames music generation as a hierarchical sequence-to-sequence task, which lets it produce 24 kHz audio that remains consistent over several minutes.

- Conditional Music Generation: The model can be conditioned on both text and melody inputs, allowing it to generate music that adheres to text descriptions while incorporating whistled or hummed melodies.

- Diverse and Accurate Outputs: It can generate music based on various parameters, such as instruments, genres, styles, epochs, places, and musicians’ experience levels.

- MusicCaps Dataset: Google has released the MusicCaps dataset, a collection of 5,500 music-text pairs, to support future research in music generation.
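
To put the 24 kHz figure in perspective, the short sketch below works out how many audio samples a clip of a given length contains at that rate. It is only arithmetic on the sample rate quoted above and does not call MusicLM. The function names are hypothetical, and the 16-bit mono PCM format used for the size estimate is an assumption made for illustration, not a detail from the model.

```python
SAMPLE_RATE_HZ = 24_000  # sample rate quoted in this article


def num_samples(duration_s: float, sample_rate_hz: int = SAMPLE_RATE_HZ) -> int:
    """Return how many audio samples cover `duration_s` seconds."""
    return round(duration_s * sample_rate_hz)


def raw_size_bytes(duration_s: float, bytes_per_sample: int = 2, channels: int = 1) -> int:
    """Return the uncompressed size of the clip.

    Assumes 16-bit (2-byte) mono PCM by default; this format is an
    illustrative assumption, not something stated about MusicLM.
    """
    return num_samples(duration_s) * bytes_per_sample * channels


if __name__ == "__main__":
    for minutes in (1, 5):
        seconds = minutes * 60
        print(f"{minutes} min: {num_samples(seconds)} samples, "
              f"{raw_size_bytes(seconds)} bytes as 16-bit mono PCM")
```

Under these assumptions, a single minute of audio already means well over a million output values, which helps explain why keeping music consistent over several minutes is a hard sequence-modeling problem.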

Use Cases: Unleash Your Creativity with MusicLM

MusicLM offers a wide range of applications for individuals and industries alike. Some practical use cases include:

- Songwriting: Composers and songwriters can generate original, high-quality music based on text descriptions, transforming their creative ideas into reality.

- Melody Transformation: Musicians can experiment with different styles by providing text descriptions and whistled or hummed melodies as input, allowing MusicLM to generate music that matches the described style.

- Film and Video Production: Filmmakers and video producers can create custom-generated music tailored to their projects’ themes, enhancing the overall viewing experience.

- Podcasts and Presentations: Podcasters and presenters can produce unique background music to complement their content and engage their audiences.

- Research: With the publicly available MusicCaps dataset, researchers can advance the field of music generation and explore new possibilities in AI-generated music.

Pricing

At the time of writing, MusicLM is not publicly available, and no pricing plans have been announced. However, the released MusicCaps dataset gives researchers and other interested users a resource for studying text-conditioned music generation.

Conclusion

MusicLM represents a significant advancement in AI-generated music, offering high-quality and diverse outputs based on text descriptions and melody inputs. Its features and potential use cases make it a valuable tool for many industries and creative individuals. While the model is not yet publicly available, the release of the MusicCaps dataset opens the door to further research and development in this area. MusicLM earns 4 out of 5 stars; the missing star reflects the model’s current unavailability and the lack of pricing information. Once released, it could revolutionize the way we produce and interact with music.

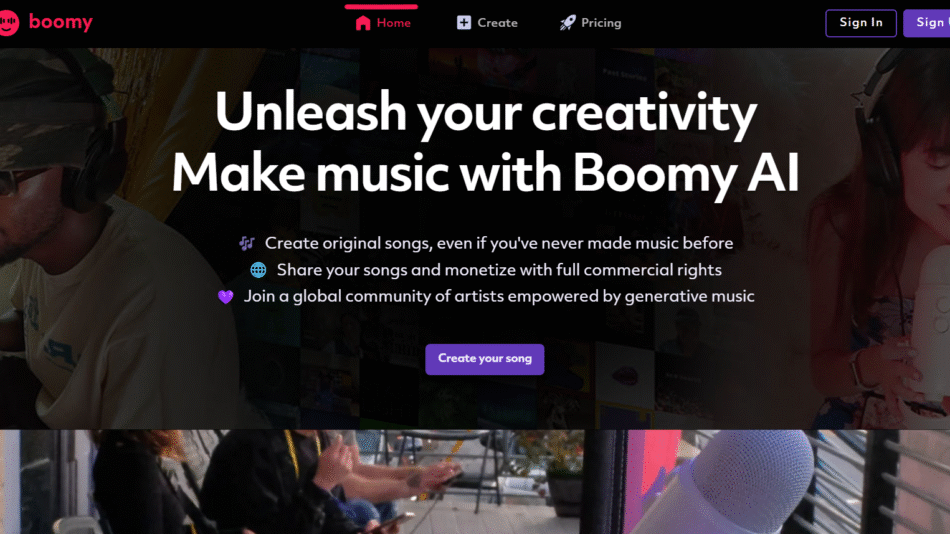

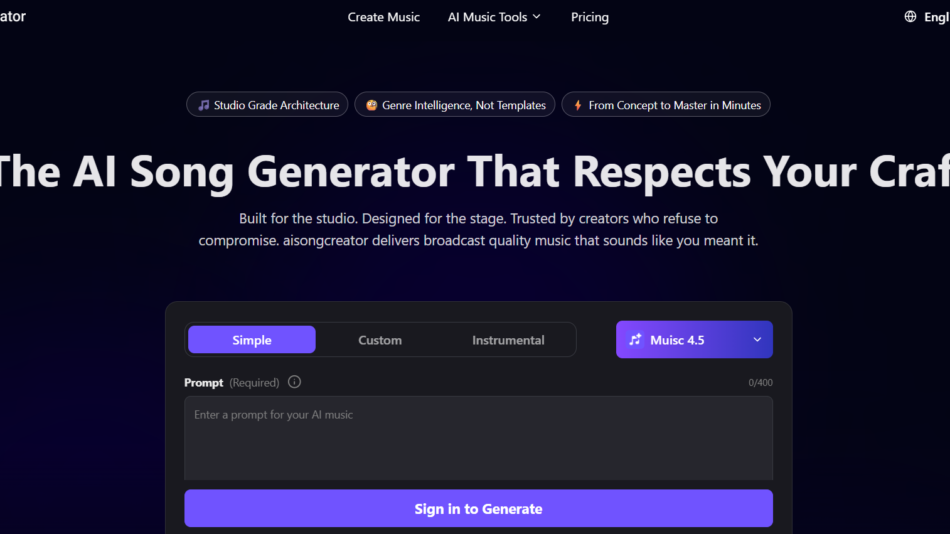

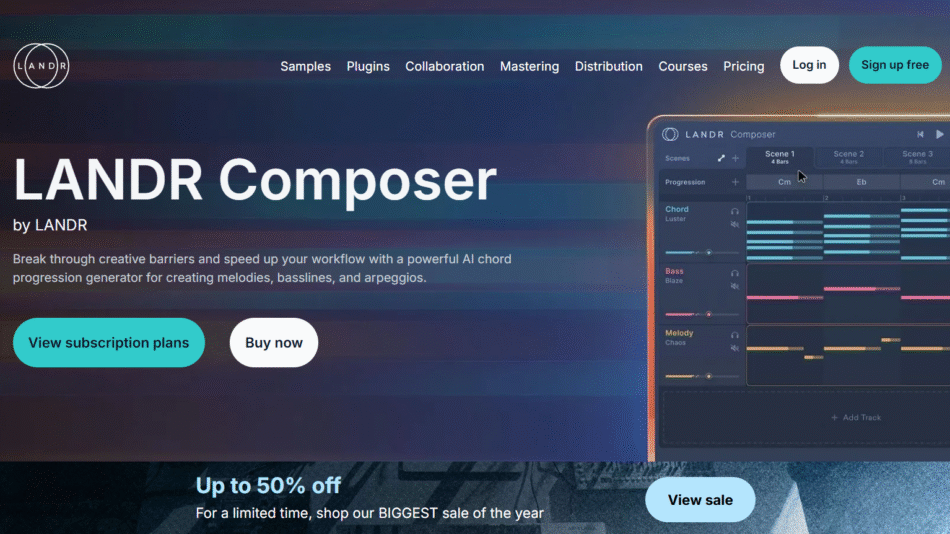
