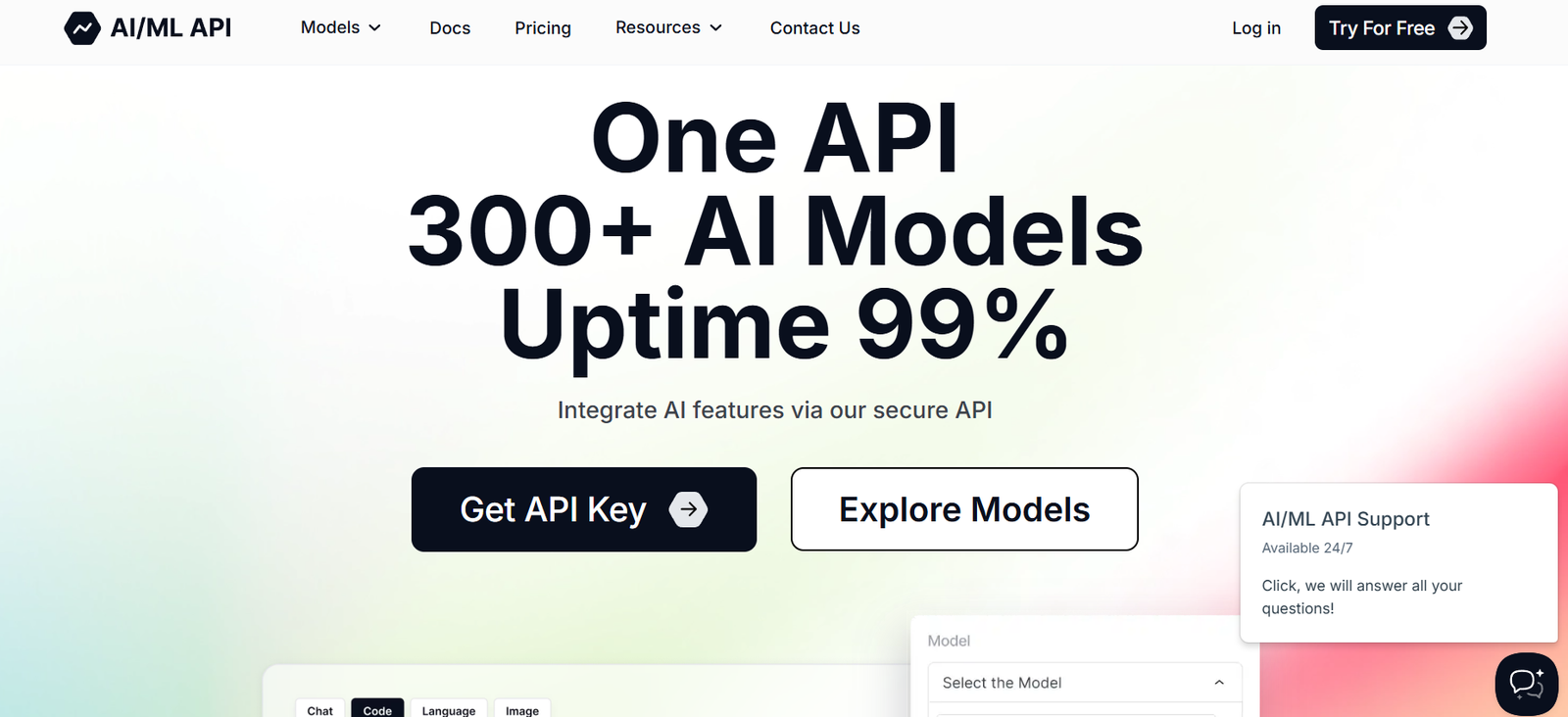

AIMLAPI is a developer-focused platform that offers simple and reliable API access to open-source large language models (LLMs) such as Mistral, LLaMA 2, and other GGUF-compatible models. Designed to serve startups, developers, researchers, and indie hackers, AIMLAPI aims to make LLMs as accessible and affordable as possible—without requiring users to manage their own infrastructure or understand complex model deployment pipelines.

By abstracting the technical complexities of hosting and running models, AIMLAPI enables developers to focus on building AI-powered applications, not managing servers or GPUs. Whether you’re building a chatbot, summarizer, code assistant, or a generative AI app, AIMLAPI offers a scalable and secure solution for integrating LLMs via simple API calls.

Features

AIMLAPI includes several features designed to streamline API access to powerful open-source models:

Fast API Access to Multiple Models

Get started instantly with access to top-performing models like Mistral 7B, LLaMA 2, Nous Hermes, and more.

Simple REST API Interface

Send requests using standard HTTP methods, with no special SDK or complex authentication required.

High-Speed Inference

Optimized for low-latency response times, suitable for real-time applications.

Rate Limits for Control and Scaling

Developers can monitor usage with transparent rate limiting to support scaling and cost management.

Secure & Private

All communication is encrypted via HTTPS, and no user data is stored or used for training.

Global Infrastructure

Deployments are hosted on powerful cloud infrastructure optimized for LLMs, ensuring stability and uptime.

Instant API Key Access

Get an API key and start testing within minutes, ideal for prototyping and fast iteration.

Pay-As-You-Go Pricing

Only pay for what you use. AIMLAPI offers clear, affordable pricing for hobbyists and enterprise use alike.
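Because the API is rate limited, client code usually needs a retry strategy. The sketch below shows one common approach, exponential backoff. It assumes the caller supplies a `send` function that raises a hypothetical `RateLimited` exception when the service reports a rate limit (conventionally HTTP 429). How AIMLAPI actually signals rate limits is not stated here, so treat the names and the retry policy as illustrative.

```python
import time


class RateLimited(Exception):
    """Hypothetical error raised by the caller's send function on a rate limit."""


def call_with_backoff(send, max_attempts=5, base_delay=1.0, sleep=time.sleep):
    """Call send() and retry with exponential backoff while it raises RateLimited."""
    for attempt in range(max_attempts):
        try:
            return send()
        except RateLimited:
            if attempt == max_attempts - 1:
                raise  # out of attempts: surface the error to the caller
            sleep(base_delay * 2 ** attempt)  # waits 1s, 2s, 4s, ...
```

Passing `sleep` as a parameter keeps the helper easy to test without real delays.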

How It Works

AIMLAPI is designed to get you up and running quickly with minimal setup. Here’s how it works:

Sign Up and Get API Key

Visit https://aimlapi.com, register your account, and instantly receive an API key.

Choose a Model

Select from a list of supported LLMs like Mistral, Nous Hermes, or LLaMA.

Send API Requests

Use the provided endpoint to send a prompt and receive generated text in response. The request includes your model, temperature, max tokens, and other optional parameters.

Review Responses and Iterate

Quickly receive the model’s response, review output, and adjust your prompt or parameters as needed.

Manage Usage and Billing

Track API usage from the dashboard and manage your credits with full transparency.
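The request step above can be sketched with Python's standard library. This is a minimal illustration, not the documented API: the endpoint URL, the JSON field names (`model`, `prompt`, `temperature`, `max_tokens`), the `Bearer` authorization header, and the model identifier are all assumptions, so check the official documentation for the real values.

```python
import json
import urllib.request

# Hypothetical endpoint: the real URL and request schema are not given in this article.
API_URL = "https://example.invalid/v1/completions"


def build_request(api_key, model, prompt, temperature=0.7, max_tokens=256):
    """Build (but do not send) a JSON POST request for a text generation call."""
    payload = {
        "model": model,              # assumed field name
        "prompt": prompt,            # assumed field name
        "temperature": temperature,  # assumed field name
        "max_tokens": max_tokens,    # assumed field name
    }
    return urllib.request.Request(
        API_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",  # assumed auth scheme
            "Content-Type": "application/json",
        },
        method="POST",
    )


request = build_request("YOUR_API_KEY", "mistral-7b", "Summarize: ...")
# To send it: urllib.request.urlopen(request), then parse the JSON response body.
```

Separating request construction from sending makes it easy to inspect or log the payload while iterating on prompts and parameters.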

Use Cases

AIMLAPI is suitable for a wide range of applications where fast, affordable LLM access is needed:

AI Chatbots and Virtual Assistants

Embed LLM-powered chat interfaces in your product with low-latency responses.

Summarization and Text Analysis

Use models to summarize long documents, extract keywords, or analyze sentiment.

Content Generation Tools

Build tools for automated email writing, social media posts, product descriptions, etc.

Code Completion and Debugging

Leverage code-tuned LLMs for autocomplete, refactoring, or commenting tools.

Educational Apps

Create AI tutors or explainers using language models to help users understand complex subjects.

Prototyping and MVP Development

Use AIMLAPI to quickly validate AI product ideas without large upfront costs or infrastructure.
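For the summarization use case, long documents often exceed a model's input limit, so a common pattern is to summarize in chunks and then summarize the partial summaries. The sketch below assumes a caller-supplied `summarize` function (for example, a wrapper around an API call) and uses character counts as a rough stand-in for tokens. Both helper names are hypothetical.

```python
def split_into_chunks(text, max_chars=2000):
    """Group paragraphs into chunks of up to max_chars characters.

    A single paragraph longer than max_chars is kept whole as its own chunk.
    """
    chunks, current = [], ""
    for paragraph in text.split("\n\n"):
        candidate = f"{current}\n\n{paragraph}" if current else paragraph
        if len(candidate) <= max_chars:
            current = candidate
        else:
            if current:
                chunks.append(current)
            current = paragraph
    if current:
        chunks.append(current)
    return chunks


def summarize_long(text, summarize, max_chars=2000):
    """Summarize each chunk, then summarize the joined partial summaries."""
    partials = [summarize(chunk) for chunk in split_into_chunks(text, max_chars)]
    if len(partials) == 1:
        return partials[0]
    return summarize("\n".join(partials))
```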

Pricing

AIMLAPI uses a pay-as-you-go pricing model based on the number of tokens processed per request. As of the latest information from the official website:

Current Pricing

$0.50 per 1 million tokens

No monthly subscription required

No hidden fees

Pay only for what you use

This pricing is among the most affordable in the industry, making AIMLAPI highly accessible for indie developers and startups.

Users can also monitor usage in real time and set usage limits to control spending. Bulk or enterprise-level usage may be eligible for custom pricing; contact the team directly.
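The per-token rate makes cost estimates simple arithmetic: 250,000 tokens at $0.50 per million comes to $0.125. The sketch below wraps that calculation and adds a client-side budget guard. `UsageBudget` is a hypothetical helper, not a platform feature, and it assumes every processed token is billed at the same rate.

```python
PRICE_PER_MILLION_TOKENS_USD = 0.50  # rate quoted in this article


def estimate_cost(tokens):
    """Return the estimated cost in USD for a number of processed tokens."""
    return tokens / 1_000_000 * PRICE_PER_MILLION_TOKENS_USD


class UsageBudget:
    """Client-side spending guard (hypothetical helper, not part of the service)."""

    def __init__(self, limit_usd):
        self.limit_usd = limit_usd
        self.tokens_used = 0

    def record(self, tokens):
        """Add tokens from one request; raise if the budget would be exceeded."""
        projected = estimate_cost(self.tokens_used + tokens)
        if projected > self.limit_usd:
            raise RuntimeError(
                f"budget exceeded: ${projected:.2f} > ${self.limit_usd:.2f}"
            )
        self.tokens_used += tokens

    @property
    def spent_usd(self):
        """Estimated spend so far in USD."""
        return estimate_cost(self.tokens_used)
```

Calling `record` with the token count of each response keeps a running total and stops the application before it overspends.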

Strengths

AIMLAPI offers several compelling advantages for developers seeking LLM access without infrastructure hassles:

Developer-Centric Design

The API is built with simplicity and speed in mind, ideal for developers who want to plug in LLMs without managing GPUs.

Affordable Token Pricing

$0.50 per million tokens is extremely cost-effective, especially for early-stage or experimental projects.

No Lock-In to Closed Models

Access open-source models without relying on closed platforms like OpenAI or Anthropic.

No Infrastructure Required

No servers, Docker containers, or GPU provisioning: just an API key and an endpoint.

Rapid Prototyping Capability

Get a working proof of concept with AI in hours, not days or weeks.

Secure and Compliant

HTTPS-secured API calls and no data retention by default.

Drawbacks

As a lean platform focused on simplicity, AIMLAPI may not cover every enterprise-level need:

No UI or Playground (as of now)

Testing is done via API only; there is no built-in GUI for prompt engineering or model interaction.

Limited Customization

You cannot fine-tune or upload your own models; only inference from pre-hosted models is supported.

Fewer Models than Larger Providers

Currently offers access to select open-source models. No GPT-4 or Claude-level models.

Token Management May Require Monitoring

With pay-as-you-go pricing, users must monitor token usage to avoid surprise costs.

Lacks SDKs or Integrations (Currently)

Does not yet offer official client libraries for Python, JavaScript, etc.

Comparison with Other Tools

AIMLAPI fills a unique niche between local LLMs and enterprise cloud platforms:

Versus OpenAI API

OpenAI offers GPT-3.5/4 but is more expensive and requires more compliance overhead. AIMLAPI is cheaper, simpler, and based on open-source models.

Versus Hugging Face Inference API

Hugging Face supports many models, but pricing can be higher and may require setup. AIMLAPI is optimized for speed and affordability.

Versus Ollama or LM Studio

Those tools are for local model inference, while AIMLAPI is for hosted access via API, with no local resource consumption needed.

Versus Together.ai or Anyscale

AIMLAPI is simpler, more developer-friendly, and currently offers better per-token pricing.

Customer Reviews and Community Feedback

Although relatively new, AIMLAPI has started gaining recognition among indie devs, open-source AI enthusiasts, and builders on platforms like X (formerly Twitter) and GitHub.

Early adopters have praised the platform:

“Unbelievably fast setup. Had my AI app working in an hour.”

“Great pricing for bootstrappers like me.”

“Finally an LLM API that doesn’t require a cloud engineer to use.”

“Mistral 7B running smooth and fast. Good latency.”

Developers appreciate its minimalistic, no-nonsense approach to LLM integration—especially for small teams and prototypes.

Conclusion

AIMLAPI offers one of the most accessible and affordable ways to interact with open-source LLMs via API. Whether you’re an indie hacker building an AI chatbot, a startup validating an idea, or a developer prototyping a generative tool, AIMLAPI makes it simple to plug into powerful models without infrastructure or high costs.

With flat per-token pricing, fast response times, and a developer-first experience, it’s quickly becoming a go-to solution for lightweight AI integration. If you’re seeking an easy, fast, and scalable way to work with LLMs, AIMLAPI is well worth trying.