Toqan AI is an enterprise-grade platform designed to help organizations securely build, deploy, and manage custom large language models (LLMs). Built for regulated industries and companies with strict data privacy requirements, Toqan AI offers a fully modular and secure infrastructure stack tailored for responsible AI use.

The platform supports LLM fine-tuning, deployment, observability, and policy management—all within environments that prioritize compliance, performance, and security. Whether running on-premises, in private cloud, or hybrid setups, Toqan AI gives organizations full control over their AI stack while enabling them to develop bespoke AI solutions that meet their unique operational and regulatory needs.

Toqan’s infrastructure-first approach makes it ideal for enterprises that need sovereign, scalable, and trustworthy generative AI capabilities.

Features

Toqan AI offers a comprehensive feature set that supports the entire lifecycle of LLM deployment in enterprise environments:

Secure Model Hosting

Host and serve open-source or proprietary LLMs with full isolation, encryption, and data residency compliance.

Modular AI Infrastructure

Choose and integrate components like vector databases, APIs, orchestration layers, and observability tools in a plug-and-play architecture.

Custom Fine-Tuning Support

Train or fine-tune models using private data, ensuring models align with internal knowledge and remain fully compliant with industry requirements.

Policy and Access Controls

Set fine-grained permissions, usage restrictions, and governance policies for LLM interactions across teams and applications.

Real-Time Monitoring and Observability

Track model usage, performance, token consumption, latency, and error rates with built-in dashboards and logging.

Multi-Modal Model Support

Run not only language models but also multimodal models capable of processing text, code, and potentially other input types like images.

Deployment Flexibility

Deploy LLMs on your own infrastructure, whether on-premises, in VPC environments, or in a private cloud, so that you retain full ownership and control.

Developer and API-Friendly

Comes with APIs, SDKs, and CLI tools to integrate seamlessly with development pipelines, backend systems, and enterprise applications.
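To make the monitoring feature more concrete, the following is a minimal sketch of the kind of per-model aggregation such a dashboard might compute from request logs. It is not Toqan's API: the `RequestLog` record, its field names, and the `summarize` function are all hypothetical and are used only to illustrate token consumption, latency, and error-rate tracking.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass(frozen=True)
class RequestLog:
    """One logged model call. All field names are hypothetical."""
    model: str
    latency_ms: float
    tokens: int
    ok: bool


def summarize(logs: list[RequestLog]) -> dict[str, dict[str, float]]:
    """Group logs by model and compute per-model usage statistics."""
    by_model: dict[str, list[RequestLog]] = {}
    for log in logs:
        by_model.setdefault(log.model, []).append(log)

    summary: dict[str, dict[str, float]] = {}
    for model, entries in by_model.items():
        failures = sum(1 for e in entries if not e.ok)
        summary[model] = {
            "requests": len(entries),
            "total_tokens": sum(e.tokens for e in entries),
            "mean_latency_ms": mean(e.latency_ms for e in entries),
            "error_rate": failures / len(entries),
        }
    return summary


if __name__ == "__main__":
    # Illustrative data only; the model names are placeholders.
    sample = [
        RequestLog("model-a", 120.0, 300, True),
        RequestLog("model-a", 180.0, 500, False),
        RequestLog("model-b", 250.0, 900, True),
    ]
    for model, stats in summarize(sample).items():
        print(model, stats)
```

In a real deployment these figures would come from the platform's own logging and dashboards; the sketch only shows how the tracked quantities relate to raw request records.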

How It Works

Set Up the Infrastructure

Choose a deployment model (on-prem, hybrid, private cloud) and install Toqan’s core modules, including LLM runners, orchestrators, and observability layers.

Upload or Choose Models

Load open-source LLMs (e.g., LLaMA, Mistral, Falcon) or bring your own fine-tuned models. Toqan supports a variety of model architectures.

Customize and Fine-Tune

Fine-tune models using internal datasets or domain-specific knowledge, ensuring the outputs align with business language, tone, and constraints.

Enforce Policies and Monitor

Define governance rules and enable monitoring to track usage, detect anomalies, and ensure responsible AI behavior.

Integrate with Enterprise Tools

Use APIs or SDKs to connect your custom LLMs to enterprise software, internal apps, and digital services.
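The policy-enforcement and integration steps above can be sketched as a small gate that an application calls before forwarding a prompt to a model. This is an illustration under stated assumptions, not Toqan's SDK: `Policy`, `PolicyViolation`, `guarded_call`, and the `backend` callable are hypothetical names, and the backend here is a stub standing in for whatever client a real deployment would use.

```python
from dataclasses import dataclass
from typing import Callable


class PolicyViolation(Exception):
    """Raised when a request breaks a governance rule."""


@dataclass
class Policy:
    """Hypothetical governance rules for one team or application."""
    allowed_models: set[str]
    max_tokens_per_request: int
    daily_token_budget: int
    used_today: int = 0


def guarded_call(
    policy: Policy,
    model: str,
    prompt: str,
    requested_tokens: int,
    backend: Callable[[str, str], str],
) -> str:
    """Check the request against the policy, then forward it to the backend."""
    if model not in policy.allowed_models:
        raise PolicyViolation(f"model {model!r} is not allowed")
    if requested_tokens > policy.max_tokens_per_request:
        raise PolicyViolation("per-request token limit exceeded")
    if policy.used_today + requested_tokens > policy.daily_token_budget:
        raise PolicyViolation("daily token budget exceeded")

    # Record usage only after every check has passed.
    policy.used_today += requested_tokens
    return backend(model, prompt)


def stub_backend(model: str, prompt: str) -> str:
    """Stand-in for a real model client; it only echoes its input."""
    return f"[{model}] {prompt}"


if __name__ == "__main__":
    team_policy = Policy({"internal-model"}, 512, 1000)
    print(guarded_call(team_policy, "internal-model", "Summarize Q3.", 200, stub_backend))
```

Keeping the checks in one function means a rejected request never reaches the model and never counts against the budget, which is one simple way to make usage restrictions auditable.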

Use Cases

Toqan AI supports a wide variety of enterprise and government use cases where data security, compliance, and control are mission-critical:

Financial Services

Build LLM-based assistants that summarize financial documents, support compliance reviews, or automate customer interactions, all within private infrastructure.

Healthcare and Life Sciences

Fine-tune models on sensitive patient data and deploy in HIPAA-compliant environments for use in clinical decision support or documentation.

Legal and Compliance Teams

Use Toqan to develop internal knowledge retrieval tools and contract summarization models that never leave your secure network.

Public Sector and Defense

Deploy sovereign AI infrastructure for secure, offline, or air-gapped environments in national defense or public administration.

Enterprise Knowledge Management

Integrate secure LLMs with internal documentation, wikis, and communication tools to build custom chatbots or knowledge assistants.

SaaS Product Teams

Offer LLM capabilities within your products without sending data to third-party APIs, ensuring user privacy and brand consistency.

Pricing

Toqan AI does not list pricing information publicly. The platform follows a custom pricing model, which is tailored based on:

Number of models and deployment scale

Type of hosting (on-premises, hybrid, cloud)

Required modules (e.g., observability, governance, fine-tuning)

Volume of usage (tokens, queries)

Support level and SLAs

Interested organizations can request a demo and receive a customized quote by contacting the Toqan AI team via https://toqan.ai.

Strengths

Built specifically for secure, enterprise-scale LLM deployment

Modular infrastructure allows full customization

Enables full control over data, policies, and compliance

Ready for on-premises and private cloud deployment

API-friendly for integration into enterprise systems

Supports open-source and proprietary models

Drawbacks

No fixed or transparent pricing available

May require DevOps and IT support to implement at scale

Designed for enterprise teams—not intended for individual developers or small startups

Some advanced features may require onboarding assistance

Comparison with Other Tools

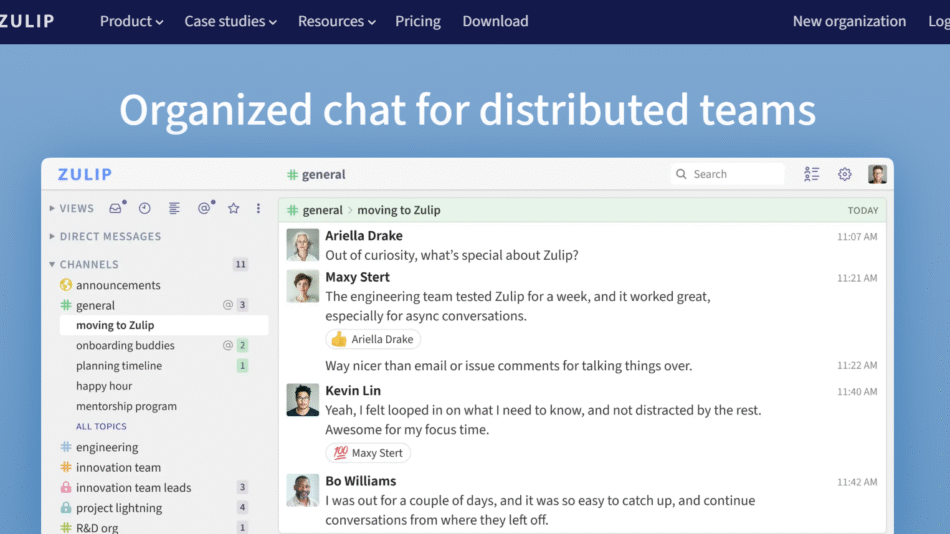

Toqan AI competes in the same space as platforms like OpenLLM, Predibase, or Hugging Face Inference Endpoints, but it is more focused on infrastructure, compliance, and enterprise deployment.

Unlike API-first services such as OpenAI or Anthropic, Toqan AI is built for organizations that need to own and operate their own AI stack, not depend on external black-box models.

Its emphasis on secure hosting, modularity, and real-time observability makes it a standout option for CIOs, CTOs, and MLOps leaders who need to balance innovation with control and compliance.

Customer Reviews and Testimonials

Toqan AI is a growing company with early adoption in the finance, healthcare, government, and enterprise SaaS sectors. Detailed public testimonials are not yet listed, but the platform’s positioning and use cases are aimed at teams looking for private, compliant alternatives to public AI services.

Prospective users are encouraged to request demo sessions and case studies directly from the company through its contact form.

Conclusion

As enterprises move from AI experimentation to deployment at scale, Toqan AI delivers the infrastructure they need to do it securely, reliably, and responsibly. Its modular platform helps businesses take full ownership of their LLMs, enabling use cases that would otherwise be too risky or too complex to manage via third-party APIs.

With support for fine-tuning, governance, observability, and custom deployment, Toqan AI empowers organizations to build trustworthy generative AI systems on their own terms.